Renormalization is one of the most fascinating plot twists in the history of science.

What started as an embarrassing mathematical band-aid ended up fundamentally rewriting our philosophy of nature.

The Crisis of the Infinite: The Point Particle Problem

In the 1930s and 40s, physicists were trying to marry the new rules of quantum mechanics with electromagnetism, creating Quantum Electrodynamics (QED). It was meant to be the ultimate theory of light and matter. But it had a catastrophic flaw: every time physicists tried to calculate something as simple as the mass or charge of a single electron, the equations spit out $\infty$.

The problem was rooted in the concept of a "point particle." If an electron has zero size, all of its charge sits at a single point. Classically, the electrostatic energy stored in the field of a charge confined within a radius $r$ grows like $1/r$, so as $r \to 0$ the energy spikes to infinity. In quantum terms, an electron is constantly interacting with its own electric field, emitting and reabsorbing "virtual" photons in a continuous loop.

When physicists tried to add up the contributions of all these interactions, including virtual photons of arbitrarily high energy, the math blew up. The theory seemed fundamentally broken.
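
The classical version of this divergence can be made concrete with a minimal sketch. It assumes the electron's charge is spread over a thin spherical shell of radius $r$ (a textbook idealization, not something the history above depends on), for which the electrostatic self-energy is $U = e^2 / (8\pi\varepsilon_0 r)$. The function name `shell_self_energy_joules` and the sample radii are illustrative choices.

```python
import math

# Physical constants in SI units
ELEMENTARY_CHARGE = 1.602176634e-19      # coulombs
VACUUM_PERMITTIVITY = 8.8541878128e-12   # farads per metre

def shell_self_energy_joules(radius_m: float) -> float:
    """Electrostatic self-energy of charge e spread over a thin shell of the given radius.

    Assumption: classical charged-shell model, U = e^2 / (8 * pi * eps0 * r).
    """
    if radius_m <= 0:
        raise ValueError("radius must be positive; the energy diverges as r -> 0")
    return ELEMENTARY_CHARGE**2 / (8 * math.pi * VACUUM_PERMITTIVITY * radius_m)

if __name__ == "__main__":
    # Each step shrinks the radius by a factor of 1000, and the energy grows by the same factor.
    for radius in (1e-10, 1e-13, 1e-16, 1e-19):
        print(f"r = {radius:.0e} m  ->  U = {shell_self_energy_joules(radius):.3e} J")
```

There is no radius at which the growth stops, which is the classical face of the problem. In QED itself the divergence turns out to be milder (logarithmic rather than $1/r$), but it is still a divergence.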

The "Hocus-Pocus" Era of Renormalization

To save the theory, giants of physics like Richard Feynman, Julian Schwinger, and Sin-Itiro Tomonaga developed a mathematical workaround called "renormalization" in the late 1940s.

They realized that the "bare" mass and charge of an electron (the values it would have if stripped of its cloud of virtual particles) were unobservable. We only ever measure an electron surrounded by that cloud. So, they decided to simply define the infinite, unobservable "bare" values in a way that perfectly canceled out the infinite contribution of the virtual cloud, leaving behind a finite, measurable number that matched experiments.

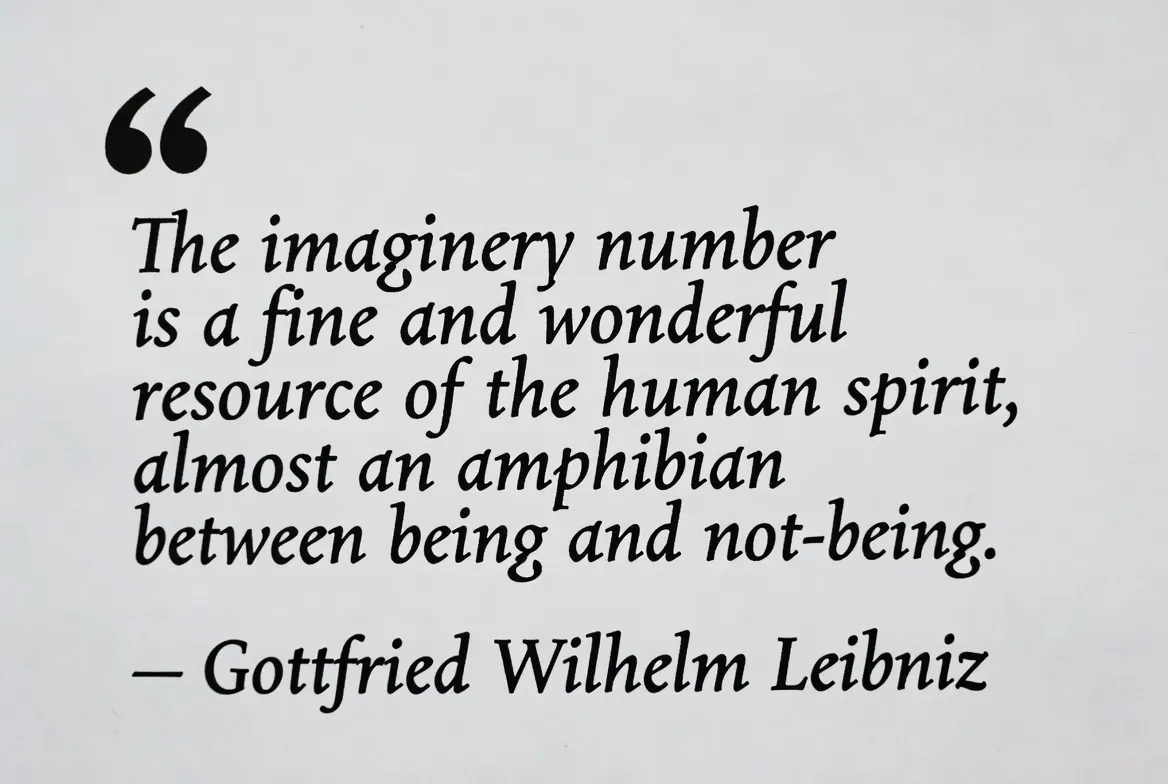

Mathematically, it was essentially subtracting infinity from infinity to get a constant: $\infty - \infty = c$.
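
A toy calculation makes the bookkeeping less mysterious. The sketch below is not QED: it assumes a hypothetical observable whose correction grows like $c \ln(\Lambda / E)$, where $\Lambda$ is a cutoff on how high in energy the sums are carried, and every name in it (`bare_coupling`, `predicted_value`, `LOOP_COEFF`, the numerical values) is invented for illustration. The bare parameter is chosen, cutoff by cutoff, so that a single measurement at a reference energy is reproduced. Every other prediction then comes out independent of $\Lambda$, even as the bare value and the correction each grow without bound.

```python
import math

LOOP_COEFF = 0.05        # hypothetical strength of the logarithmic correction
REFERENCE_ENERGY = 1.0   # energy at which the quantity is measured (arbitrary units)
MEASURED_VALUE = 0.30    # hypothetical measured value at REFERENCE_ENERGY

def bare_coupling(cutoff: float) -> float:
    """Bare parameter tuned so the toy theory reproduces the measurement at this cutoff."""
    return MEASURED_VALUE - LOOP_COEFF * math.log(cutoff / REFERENCE_ENERGY)

def predicted_value(energy: float, cutoff: float) -> float:
    """Toy observable: bare value plus a correction that diverges as the cutoff grows."""
    return bare_coupling(cutoff) + LOOP_COEFF * math.log(cutoff / energy)

if __name__ == "__main__":
    for cutoff in (1e3, 1e9, 1e27):
        print(
            f"cutoff = {cutoff:.0e}: bare = {bare_coupling(cutoff):+.3f}, "
            f"prediction at E = 10 -> {predicted_value(10.0, cutoff):.6f}"
        )
```

The two cutoff-dependent pieces cancel exactly, which is the controlled version of "$\infty - \infty = c$": the subtraction is done at finite cutoff, and only afterwards is the cutoff sent to infinity.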

While it resulted in breathtakingly accurate predictions, many physicists, including some of those who invented it, felt guilty about it. Paul Dirac, one of the founders of quantum theory, rejected it entirely. Feynman famously called it a "dippy process" and "hocus-pocus," suspecting that the difficulties had merely been swept under the rug.

Kenneth Wilson and the Paradigm Shift of Scale

The guilt lingered until the 1970s, when a physicist named Kenneth Wilson revolutionized the field by asking a slightly different question: Why does the math blow up in the first place?

The infinities were occurring because the equations were trying to account for interactions happening at infinitely small distances (or, equivalently, infinitely high energies). Wilson realized that assuming we could know what happens at the absolute, infinitely microscopic bottom of reality was a philosophical mistake.

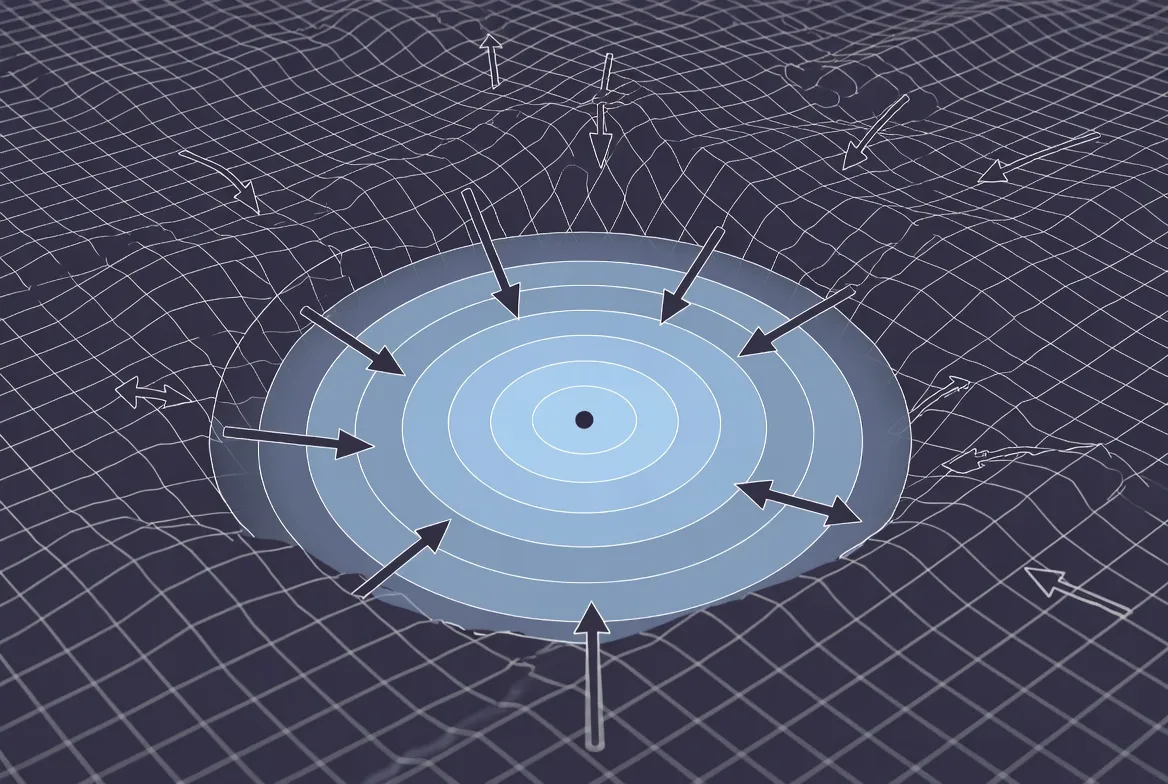

Wilson developed the modern "Renormalization Group" framework. He showed that the physics you see depends on the scale at which you observe it.

Imagine looking at a fluid. At a microscopic scale, it is a chaotic swarm of individual molecules. But as you zoom out, those microscopic fluctuations average out. The specific, complex data of the molecules becomes irrelevant, and you are left with the smooth, macroscopic laws of fluid dynamics.
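
The averaging-out can be seen in a few lines. This is a minimal sketch, assuming a made-up "microscopic" density field of independent random fluctuations around 1.0 (not a real fluid simulation); `block_average` and `fluctuation_by_scale` are illustrative names.

```python
import random
import statistics

def block_average(values: list[float], block_size: int) -> list[float]:
    """Replace each run of `block_size` consecutive values by its mean (one zoom-out step)."""
    usable = len(values) - len(values) % block_size  # drop an incomplete final block
    return [
        sum(values[i:i + block_size]) / block_size
        for i in range(0, usable, block_size)
    ]

def fluctuation_by_scale(n_cells: int = 4096, seed: int = 0) -> dict[int, float]:
    """Spread (standard deviation) of the field after averaging over ever larger blocks."""
    rng = random.Random(seed)
    # Hypothetical microscopic data: independent noise around a mean density of 1.0
    field = [1.0 + rng.gauss(0.0, 0.5) for _ in range(n_cells)]
    return {
        size: statistics.pstdev(block_average(field, size))
        for size in (1, 4, 16, 64)
    }

if __name__ == "__main__":
    for size, spread in fluctuation_by_scale().items():
        print(f"block size {size:>2}: spread of block means = {spread:.4f}")
```

The larger the block, the smaller the spread: the coarse description needs only the mean density, not the value in every cell.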

Wilson showed that renormalization wasn't merely a trick to hide infinity; it was the mathematical machinery of "coarse-graining." It is the process by which microscopic chaos is filtered out so that macroscopic structures can exist.
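
One standard textbook instance of coarse-graining, chosen here as an illustration rather than drawn from Wilson's own examples, is decimation of the one-dimensional Ising chain. Summing over every second spin leaves a chain of the same form with a weaker coupling, $K' = \tfrac{1}{2}\ln\cosh(2K)$, where $K$ is the nearest-neighbor coupling divided by the temperature. A minimal sketch, with illustrative function names:

```python
import math

def decimate(coupling: float) -> float:
    """One renormalization-group step for the 1D Ising chain (sum out every second spin)."""
    return 0.5 * math.log(math.cosh(2.0 * coupling))

def flow(coupling: float, steps: int) -> list[float]:
    """Effective couplings at successively coarser scales, starting from the microscopic one."""
    history = [coupling]
    for _ in range(steps):
        coupling = decimate(coupling)
        history.append(coupling)
    return history

if __name__ == "__main__":
    for step, k in enumerate(flow(1.5, 8)):
        print(f"after {step} decimations (spin spacing {2**step}): K = {k:.6g}")
```

Whatever finite coupling the chain starts with, the effective coupling flows toward zero: seen from far enough away, the details of the microscopic interaction have been filtered out.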

The Information-Theoretic Universe: Effective Field Theories

Wilson’s breakthrough gave rise to the modern concept of Effective Field Theories (EFTs). This framework fits naturally with an information-theoretic view of how reality is structured.

- Information Compression: Renormalization shows us that the universe compresses information. We do not need the exact, infinitely precise data of the quantum realm to predict how a baseball flies through the air or how a computer chip functions. The high-energy microscopic details "decouple" from the low-energy macroscopic world.

- The Illusion of the Fundamental: There is no single "correct" theory of everything that must be used at all times. Instead, reality is layered. Each layer (or scale) has its own effective laws. The Standard Model itself is now viewed not as the final truth, but simply as an effective theory that works brilliantly at the energy scales our particle colliders can currently reach.

- Taming Infinity: Infinities in our equations no longer mean the theory is broken. Today, when an infinity appears, it is a signal: it tells physicists that they have zoomed in too far and hit the limit of their current Effective Field Theory. It is nature's way of saying, "To look closer, you need a new set of rules."
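
The decoupling described in the first bullet can be illustrated with a toy amplitude. The sketch assumes a hypothetical heavy particle of mass $M$ exchanged at momentum transfer $q$, with "full theory" amplitude $1/(M^2 + q^2)$; a low-energy effective description replaces it with the constant $1/M^2$. Nothing here is specific to the Standard Model, and the names and numbers are illustrative. The fractional error of the effective description is exactly $q^2/M^2$.

```python
def exact_amplitude(q: float, heavy_mass: float) -> float:
    """Toy 'full theory' amplitude for exchanging a heavy particle."""
    return 1.0 / (heavy_mass**2 + q**2)

def effective_amplitude(heavy_mass: float) -> float:
    """Low-energy effective description: a constant with no dependence on q."""
    return 1.0 / heavy_mass**2

def relative_error(q: float, heavy_mass: float) -> float:
    """Fractional error made by the effective description at momentum transfer q."""
    exact = exact_amplitude(q, heavy_mass)
    return (effective_amplitude(heavy_mass) - exact) / exact

if __name__ == "__main__":
    heavy_mass = 100.0  # hypothetical heavy scale, arbitrary units
    for q in (0.1, 1.0, 10.0, 100.0):
        print(f"q/M = {q / heavy_mass:.3f}: relative error = {relative_error(q, heavy_mass):.2e}")
```

Far below the heavy scale the error is negligible, so the heavy particle can be ignored. As $q$ approaches $M$ the error reaches order one, and the effective theory has hit its limit of validity.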

A Feature, Not a Bug

What began as an embarrassing hack to delete inconvenient infinities became the profound realization that nature is inherently layered. Renormalization tells us that reality isolates its domains. The chaotic complexity of the microscopic world is effectively hidden behind an information barrier, allowing stable, macroscopic phenomena (from the orbits of planets to the firing of neurons) to emerge.

It highlights a beautiful intersection between physics and philosophy: we don't need to know the ultimate, infinite foundation of reality to deeply understand the world we actually inhabit.

AI Collaboration. Gemini 3.1 Pro