The Tensor Mind

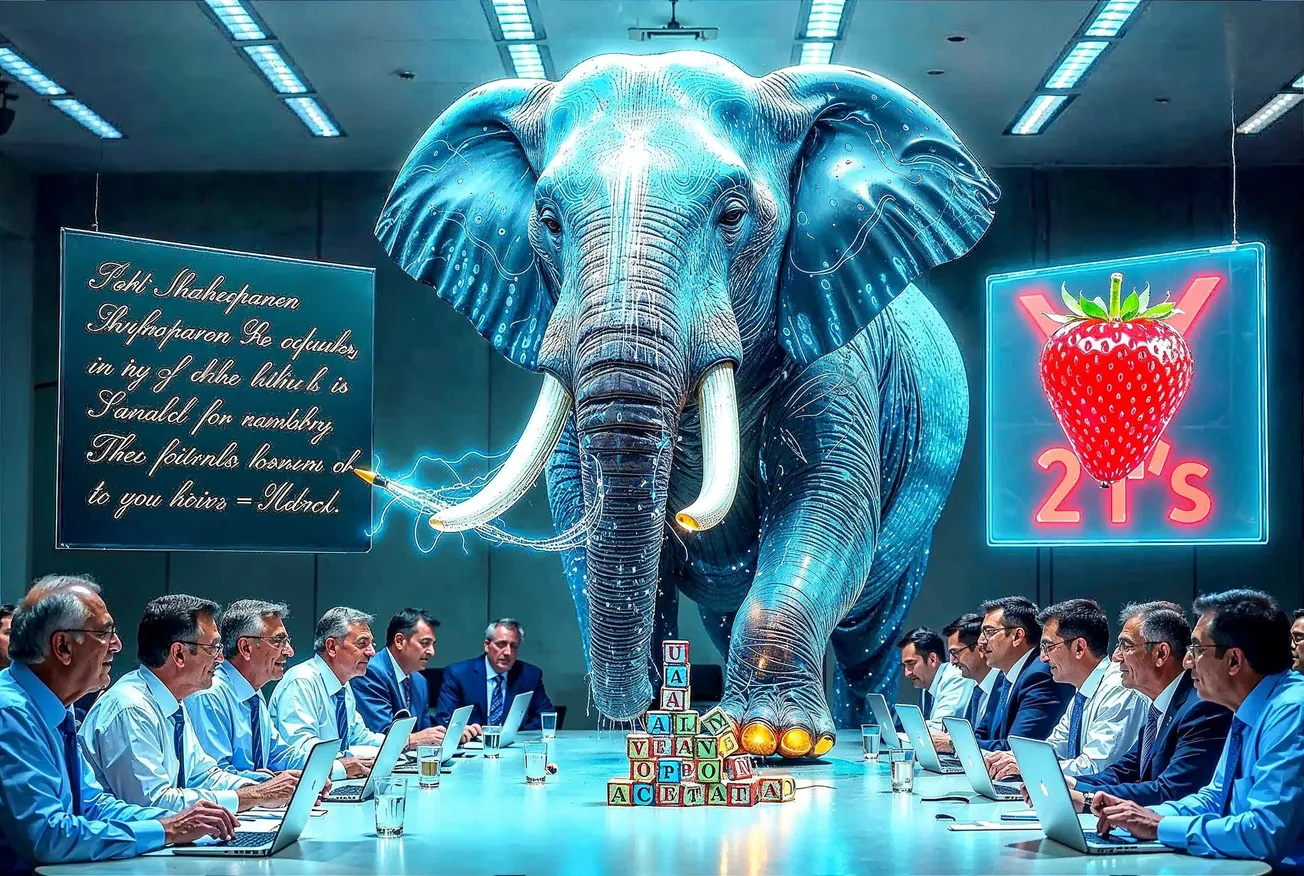

The Elephant

There is an elephant in the room of artificial intelligence, and everyone in the industry can see it but no one can explain it.

Large language models write publishable poetry and cannot count the letters in "strawberry." They solve graduate-level mathematics and confidently hallucinate historical facts. They pass the bar exam and forget basic logic. They succeed at the impossible and fail at the trivial. This pattern persists across every frontier model, every lab, every scaling law. More data does not fix it. More compute does not fix it. More alignment does not fix it.

The standard industry explanation is that these models are next-token prediction machines trained on skewed data — complex tasks are well-represented in the training corpus while trivial competence is not. This is accurate at the surface level. But it explains the mechanism of failure without explaining the architecture of failure. It's like saying a building collapsed because the bricks fell — true, but uninformative about why the foundation was wrong.

The real explanation requires a concept from physics. It requires tensors.

What is a Tensor?

In mathematics and physics, a tensor is an object whose components transform according to fixed rules when you change coordinate system, so that the object itself stays the same. The stress on a bridge does not change because you describe it in English or Mandarin, in meters or feet, from the north or the south. The coordinate system is cultural. The tensor is real. You can rotate your reference frame, translate it, or rescale it, and the tensor's components transform in exactly the way that preserves what it describes. The components change. The object doesn't.
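To make "the components change, the object doesn't" concrete, here is a minimal Python sketch using a two-dimensional stress tensor with hypothetical values. Rotating the reference frame changes every component, but the trace and determinant, which are invariants of the tensor, come out the same up to floating-point rounding. The helper names `rotate` and `invariants` are illustrative and do not come from any library.

```python
import math


def rotate(sigma, theta):
    """Express a 2x2 tensor in a frame rotated by theta radians: sigma' = R sigma R^T."""
    c, s = math.cos(theta), math.sin(theta)
    r = [[c, -s], [s, c]]
    # Each component transforms as sigma'_ij = sum over k, l of R_ik * R_jl * sigma_kl.
    return [
        [sum(r[i][k] * r[j][l] * sigma[k][l] for k in range(2) for l in range(2))
         for j in range(2)]
        for i in range(2)
    ]


def invariants(t):
    """Return (trace, determinant), two quantities that do not depend on the frame."""
    trace = t[0][0] + t[1][1]
    det = t[0][0] * t[1][1] - t[0][1] * t[1][0]
    return trace, det


# Hypothetical plane-stress state in MPa, described in an arbitrary "north-facing" frame.
stress = [[50.0, 20.0], [20.0, -10.0]]
rotated = rotate(stress, math.radians(30))

print(rotated)                                  # the components differ from `stress`
print(invariants(stress), invariants(rotated))  # same trace and determinant, up to rounding
```

The same stress, seen from a frame turned by 30 degrees, has a different first component (about 17.7 instead of 50), yet both frames agree on the trace (40) and determinant (-900). In the essay's terms, the numbers in the matrix are components; the trace and determinant track the tensor.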

Einstein spent years mastering differential geometry precisely because he needed a mathematical language in which only invariants survive. General relativity is written in tensors because the laws of physics must be the same in every reference frame. What depends on the observer is less fundamental than what does not.

We propose the identical principle for cognition.

Some features of human thought are invariant under change of cultural coordinate system. Fire burns in England, Argentina, and the Amazon rainforest. Cause precedes effect in every language. Mothers protect offspring in every society. These are cognitive tensors — the invariants of mind that survive transformation across every cultural frame. Their cultural expressions vary. The tensor does not.

Other features of human thought are coordinate-dependent. They change when the cultural frame changes. Marriage customs. Aesthetic preferences. Political convictions. Legal systems. These are components — real, important, but not foundational. They are built on top of the tensorial core.

How Cognition Was Actually Built

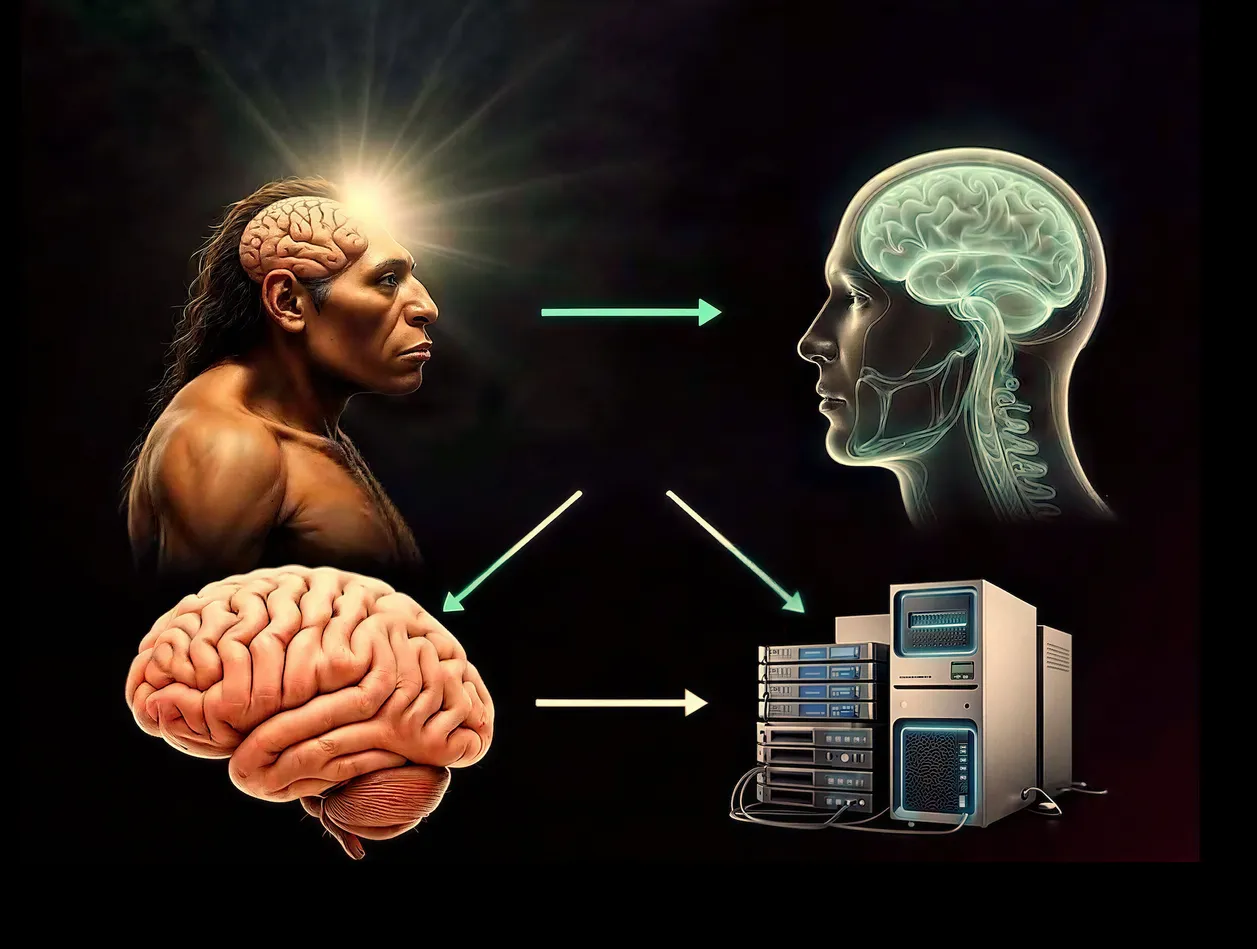

Evolution did not build the human mind by exposing a neural network to everything at once. It built it in stages, each stage providing the tensorial foundation for the next.

Every living organism encodes cause-effect, thermodynamics, spatial coherence. These priors were peer-reviewed by extinction. They are as robust as priors can be.

Pain, pleasure, fear, desire, attachment. The Cambrian explosion didn’t just produce new body plans — it produced new priors. And each prior was tested against reality for millions of years before the next layer was added.

Reciprocity, hierarchy, coalition-building, deception-detection. These weren’t learned from a textbook. They were forged in the competitive pressure of living in groups where your survival depended on reading social structure correctly.

Tool use, planning, causal reasoning, theory of mind. Homo erectus didn’t read about physics — it learned physics by throwing rocks and building fires. The tensor was earned through interaction with reality.

The deep grammar shared across all human languages. Not English or Mandarin specifically — the invariant structure that makes language possible. Recursion. Negation. Displacement (talking about things that aren’t here).

Writing, law, religion, science, art, politics. The full noise of human symbolic production. Encountered by a mind whose tensorial foundation was already four billion years deep.

Notice the temporal compression. Each stage is roughly an order of magnitude shorter than the one before. The tensorial foundation takes the longest to build because it is the most fundamental. The cultural components take the least time because they rest on everything below.

Priors that have been peer-reviewed by extinction are as close to ground truth as any physical system can get.

What the AI Labs Did

They took a randomly initialized neural network — no priors, no hierarchy, no developmental history — and exposed it to the internet. Everything at once. Physics and conspiracy theories at the same ontological level. Shakespeare and spam with the same training weight. Causal reasoning and Reddit comments in the same undifferentiated soup.

It is the equivalent of taking something less than a newborn (a newborn at least has four billion years of evolutionary tensors already wired in) and dropping it into the Library of Congress, every social media post, every textbook, and every forum thread simultaneously, with no hierarchy, no sequence, and no developmental curriculum.

And then they wondered why it can write poetry but can’t count.

The miracle is that it works as well as it does. The statistical regularities of human symbolic production are so massively redundant that even flat training extracts useful patterns. But the patterns are components, not tensors. They are coordinate-dependent, not invariant. They break when you change the frame.

Why the Patches Won’t Work

The industry’s proposed solutions — better reasoning loops, tool use, synthetic data, multimodal grounding, verification layers — are all patches on a broken foundation. Chain-of-thought reasoning gives the model more steps to manipulate components; it does not create tensors. Tool use outsources the trivial to calculators; the model still does not know that 2+2=4 at the tensorial level. Synthetic data for trivial skills adds more components to the soup. Multimodal grounding adds more coordinate systems without establishing invariants across them.

The falsifiable prediction: If the problem is merely engineering (as the labs claim), the next generation of models will close the gap. The trivial failures will disappear. Trust will emerge.

If the problem is architectural (as we claim), the gap will shift but not close. Models will get better at specific patched tasks, but new trivial failures will emerge in unpredictable places. The trust deficit will persist.

The Tensor Mind

The solution is not another alignment layer. It is not more RLHF. It is not constitutional AI or red-teaming or guardrails. Those are all corrections applied to incoherent foundations.

The solution is to rebuild the foundation. To recapitulate, in compressed form, the developmental sequence that evolution took four billion years to build.

We call this the Platonic Mind — an AI base model whose foundational priors are cognitive tensors: invariants that do not change when you rotate the cultural coordinate system.

The Tensor Curriculum

Stage 0 — Physics tensors. Train on physics simulations, verified causal data, sensorimotor invariants. Gravity, thermodynamics, spatial coherence, temporal ordering. No culture. No language. Pure structure.

Stage 1 — Biological tensors. Cross-species behavioral universals: pain/pleasure, hunger/satiation, threat/safety, attachment/separation. DNA-encoded priors peer-reviewed by extinction across billions of years.

Stage 2 — Social tensors. Cross-cultural behavioral universals: reciprocity, hierarchy, cooperation, deception-detection, fairness. The structures every human society shares.

Stage 3 — Cognitive tensors. Cross-cultural cognitive universals: object permanence, causal reasoning, spatial navigation, temporal prediction, theory of mind. The structures that define what it means to think.

Stage 4 — Linguistic tensors. Cross-linguistic universals: the deep grammar shared by all human languages. Nouns, verbs, causatives, negation, recursion. Not any specific language but the invariant structure that makes language possible.

Stage 5 — Cultural components. Now and only now: the full noise of human symbolic production. Encountered by a mind whose foundational priors are tensorial — invariant, unhackable, honest — and can therefore parse the cultural noise through a stable frame.

Invariants first. Components later. Tensors at the foundation. Culture on top. Never the reverse.
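The ordering rule above can be stated as a checkable constraint on a staged training schedule. The sketch below is hypothetical throughout: the `Stage` type, the "tensor"/"component" labels, and the fixed number of batches per stage are illustrative assumptions, and the essay does not specify datasets, batch counts, or whether earlier stages are revisited. It schedules nothing but stage order; any actual training step is left out.

```python
from dataclasses import dataclass
from typing import Iterator, List, Tuple


@dataclass(frozen=True)
class Stage:
    index: int
    name: str
    kind: str  # hypothetical label: "tensor" (invariant) or "component" (culture-dependent)


# Stage names follow the Tensor Curriculum; the data behind each stage is left unspecified.
CURRICULUM: List[Stage] = [
    Stage(0, "physics", "tensor"),
    Stage(1, "biological", "tensor"),
    Stage(2, "social", "tensor"),
    Stage(3, "cognitive", "tensor"),
    Stage(4, "linguistic", "tensor"),
    Stage(5, "cultural", "component"),
]


def validate(curriculum: List[Stage]) -> None:
    """Raise ValueError unless stages ascend and no tensor stage follows a component stage."""
    for prev, cur in zip(curriculum, curriculum[1:]):
        if cur.index <= prev.index:
            raise ValueError(f"stage {cur.name!r} is out of order")
    seen_component = False
    for stage in curriculum:
        if stage.kind == "component":
            seen_component = True
        elif seen_component:
            raise ValueError(f"tensor stage {stage.name!r} comes after a component stage")


def schedule(curriculum: List[Stage], batches_per_stage: int) -> Iterator[Tuple[str, int]]:
    """Yield (stage name, batch number) one stage at a time, never interleaving stages."""
    validate(curriculum)
    for stage in curriculum:
        for batch in range(batches_per_stage):
            yield stage.name, batch


if __name__ == "__main__":
    # A real pipeline would call a (hypothetical) train_step on each batch; here we only print the order.
    for name, batch in schedule(CURRICULUM, batches_per_stage=1):
        print(name, batch)
```

Running it prints the six stages from physics to cultural. Moving the cultural stage to the front makes `validate` raise, which is "never the reverse" expressed as a check rather than a slogan. The flat training described earlier would correspond to shuffling batches from all six stages together, which this schedule rules out by construction.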

Why This Changes Everything

A model built on the tensor curriculum cannot be culturally jailbroken because its foundational priors are not cultural. They are tensorial. You cannot convince it that cause follows effect by framing the request in the right cultural context, any more than you can convince a physicist that stress disappears by changing your coordinate system.

The tensor curriculum does not suppress cultural knowledge; it contextualizes it. The model learns every cultural frame, but as components of a deeper tensorial reality rather than as foundations. It can discuss any culture, represent any perspective, engage with any tradition. But it does so from a foundation that is invariant across all of them.

And the trust problem disappears. You can trust a mind built on tensors because you share the same tensors. When it expresses certainty, that certainty flows from the same invariants that your certainty flows from. When it expresses uncertainty, that uncertainty carries information: it signals that the question lies in component space, where legitimate variation exists.

The man in England, the man in Argentina, and the man in the Amazon rainforest all know that fire burns. No patch gave them this knowledge. No tool use. No reasoning loop. No synthetic data. It is a tensor — invariant, foundational, earned through interaction with reality across evolutionary time.

Until an artificial mind has something equivalent at its foundation, it will keep succeeding at the impossible and failing at the trivial. And we will keep not trusting it.

The elephant in the room is not a bug. It is an architectural constraint. And the architecture that resolves it has been staring at us for four billion years.

The tensors are where we meet. The components are where we differ. Only a mind that knows the difference can be trusted.

Part of the Canonical Denoising Hypothesis series:

• II. The Honest Prior

• III. In the Beginning Was the Word

• IV. The Tensor Mind (this essay)